Recording and transcribing interviews is just the beginning. AI can now score candidate responses in real time, objectively, consistently, and without a lunch break. The big question right now is whether “objective” is really what we think it is.

Picture this: a candidate finishes their answer to “Tell me about a time you led through ambiguity.” Before the interviewer has even started note-taking, an AI system has already parsed the response for structure, STAR-method adherence, keyword density, speaking pace, filler word frequency, and sentiment alignment with the job description. A score appears. A recommendation populates.

This isn’t a forecast. It’s happening today at companies deploying the latest generation of interview intelligence platforms. And it raises questions that go well beyond whether the technology works.

What interview AI actually does

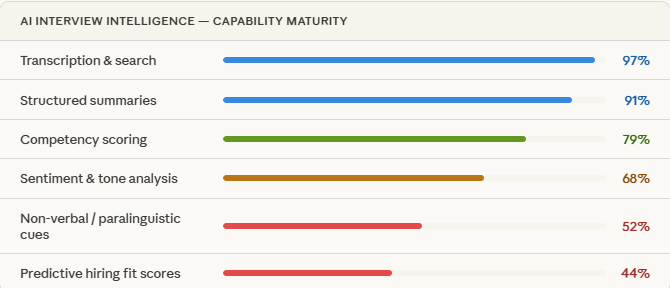

The category is moving fast. Two years ago I was amazed by a demo that showed basic video recording with searchable transcripts. That’s child’s play compared with the sophistication of current tools. Here’s what modern platforms can do in a single interview session:

The accuracy at the top of the list is genuinely impressive. Transcription from tools like Otter, Fireflies, and built-in ATS tools has become reliable enough that teams are retiring manual note-taking. Summaries are useful. Searchable recordings let hiring teams align faster.

It’s when you move down the list, toward sentiment, paralinguistics, and predictive fit, that the science gets shakier and the ethical terrain gets rocky.
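To see how thin some of these signals are, here is a minimal sketch of two of the simpler ones mentioned earlier: speaking pace and filler word frequency. It is an illustration under stated assumptions, not how any particular vendor does it. The filler list and the `surface_metrics` function are hypothetical, and real platforms do not publish theirs.

```python
import re

# Hypothetical filler list (an assumption for illustration only).
# Note that "like" and "actually" are also ordinary words, so a plain
# count will overstate fillers for some speakers.
FILLERS = {"um", "uh", "like", "basically", "actually"}


def surface_metrics(transcript: str, duration_seconds: float) -> dict:
    """Return word count, words per minute, and filler-word rate."""
    words = re.findall(r"[a-z']+", transcript.lower())
    if not words or duration_seconds <= 0:
        raise ValueError("need a non-empty transcript and a positive duration")
    filler_count = sum(1 for w in words if w in FILLERS)
    return {
        "word_count": len(words),
        "words_per_minute": len(words) * 60 / duration_seconds,
        "filler_rate": filler_count / len(words),
    }


# Made-up answer and duration, purely for demonstration.
answer = "Um, I led the migration, and, uh, we basically shipped it in six weeks."
metrics = surface_metrics(answer, duration_seconds=6.0)
print(metrics)
```

Nothing in this calculation knows whether the migration went well. It counts tokens and divides, which is worth remembering when a number like this shows up next to a hiring recommendation.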

The core goal – Eliminate interviewer bias and standardize evaluation. Surface the best candidates regardless of who happened to be in the room that day or how good a lunch they had.

The case for AI scoring (and it’s a real one)

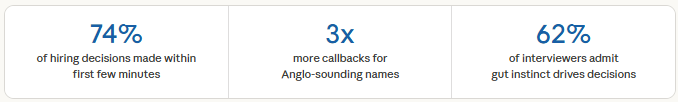

Let’s be fair to the technology before we put it on trial. Human interviewers are not neutral arbiters. The research on interviewer bias is damning: we prefer candidates who share our backgrounds, penalize non-native speakers for accent, reward extraversion regardless of job relevance, and make up our minds within the first 90 seconds.

Against that backdrop, the appeal of a system that evaluates every candidate against the same criteria, doesn’t care if you went to a rival university, and can’t be charmed by a firm handshake is obvious. Structured AI scoring promises consistency at scale. It lets smaller companies apply interview intelligence that Fortune 500 HR teams took decades to build.

For high-volume roles (support centers, logistics, retail, etc.) the operational case is even stronger. Reviewing 400 recorded interviews with AI assistance versus scheduling 400 live panel interviews isn’t a philosophical debate. It’s a resource reality.

The case for:

- Reduces documented human biases

- Consistent scoring across panels

- Enables asynchronous review

- Creates auditable decision records

- Scales to high-volume hiring

- Surfaces overlooked candidates

The case against:

- Magnifies historical hiring biases

- Penalizes neurodivergent candidates

- Implies false precision in sentiment analysis

- Ignores authentic self-expression

- Creates regulatory risk

- Damages candidate experience

Why candidates might (rightfully) hate it

Here’s where the conversation gets uncomfortable for hiring teams, because candidates know. They can see the recording indicator. They know an AI is watching, and it changes how they show up.

There’s a performative distortion that kicks in, particularly in video interviews where facial analysis is in play. Candidates who know their eye contact, nodding frequency, and smile rate are being measured will optimize for those signals, not for authentic communication. You don’t get to see who they are. You get to see who they think the algorithm wants.

The coaching economy – An entire cottage industry has emerged teaching candidates how to game AI interview scoring, from specific STAR-method sentence construction to pacing speech for sentiment analysis tools. If the signal can be coached to, it stops being a signal.

There’s also the bias laundering problem. AI systems trained on historical hiring data inherit the biases embedded in those decisions. If your top performers historically looked, spoke, and interviewed a certain way and your AI learns to score against that pattern, you haven’t removed bias. You’ve given it a lab coat and called it objective.

The algorithmic accountability issue is real too. A candidate rejected by a human interviewer can at least have an idea of what went wrong. A candidate rejected by an opaque AI scoring system has no legible feedback, no recourse, and often no indication that AI was involved at all. Several jurisdictions are now moving to require disclosure (New York City’s Local Law 144 being the highest-profile example), but compliance is still inconsistent.

And then there’s the neurodiversity dimension. Candidates with autism, ADHD, social anxiety, or other conditions may present differently on the metrics these systems measure, such as eye contact, processing speed, affect display, and filler word usage, without any correlation to job performance. Designing AI scoring systems without explicit accommodation is not just an ethical failure. In many jurisdictions, it’s a legal exposure.

The transparency question organizations avoid

Most organizations deploying interview AI have not answered the basic question candidates deserve to have answered: what is being measured, how is it being weighted, and what role does that score play in the decision?

Telling candidates a session will be “recorded for quality purposes” is not the same as telling them AI will score their vocal sentiment. These are meaningfully different levels of disclosure, and the gap between them is where trust erodes.

What good looks like – The organizations doing this well treat AI interview scoring as decision support, not decision replacement. Human reviewers see the AI assessment alongside the transcript, not instead of it. Scoring rubrics are disclosed to candidates upfront. Accommodation processes exist and are actively offered. And the system is regularly audited for disparate impact across demographic groups.
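For teams wondering what a disparate impact check looks like in its simplest form, here is a minimal sketch. It compares each group’s selection rate to the highest group’s rate and flags any ratio below 0.8, the “four-fifths” rule of thumb from the EEOC’s Uniform Guidelines. The `impact_ratios` function and the data are hypothetical, and the 0.8 threshold is a screening heuristic, not a legal determination. A real audit would also look at sample sizes, statistical significance, and intersectional groups.

```python
from collections import Counter


def impact_ratios(records):
    """Compute each group's selection rate relative to the highest rate.

    records: iterable of (group, advanced) pairs, where advanced is a bool.
    Returns {group: selection_rate / highest_selection_rate}.
    """
    totals, advanced = Counter(), Counter()
    for group, passed in records:
        totals[group] += 1
        if passed:
            advanced[group] += 1
    rates = {g: advanced[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no candidates advanced in any group")
    return {g: rate / best for g, rate in rates.items()}


# Made-up data: group A advances 30 of 50, group B advances 18 of 50.
records = (
    [("A", True)] * 30 + [("A", False)] * 20
    + [("B", True)] * 18 + [("B", False)] * 32
)
ratios = impact_ratios(records)

# Flag groups whose ratio falls below the four-fifths heuristic.
flagged = sorted(g for g, r in ratios.items() if r < 0.8)
print(ratios, flagged)
```

A flag here doesn’t prove the scoring system is discriminatory, and a clean result doesn’t prove it’s fair. It tells you where to look harder, which is the point of running the check on a regular schedule.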

The regulatory horizon is arriving faster than most HR teams realize

The EU AI Act explicitly classifies AI systems used in recruitment and candidate evaluation as high-risk, triggering requirements for transparency, human oversight, and bias auditing. The US EEOC has issued guidance on algorithmic hiring tools and disparate impact liability. Canada, the UK, and Australia are developing their own frameworks.

Organizations that adopt interview AI without building the compliance and governance infrastructure around it are accumulating a liability that will eventually come back to them. The question is whether it lands as a regulatory action, a discrimination claim, or a hit on company reputation.

So where does this leave us?

Interview AI is not going away. The productivity gains are too real, the consistency benefits are too valuable, and the technology will keep improving. But the version of this technology that makes hiring genuinely better, both for companies and candidates, requires something the field hasn’t always prioritized: honest design.

Honest about what the AI can and cannot measure. Honest about where the training data came from and what biases it may have encoded. Honest with candidates about what’s happening to their interview data and how it influences their outcome. And honest about the fact that “objective” is not a property of an algorithm. It’s a property of the choices made in designing, training, and deploying it.

The technology gives hiring teams a mirror. What they do with what they see in it is still a human decision.

The goal was never to remove humans from the loop. It was to make the humans in the loop more consistent, more fair, and more aware of their own blind spots.

What’s your organization’s approach to AI in the interview process? Are you disclosing it to candidates? Have you audited for disparate impact? I’d genuinely like to hear where teams are on this as I believe the range of practices is wider than most people realize. Start the discussion by leaving a comment!

ES Talent Solutions helps organizations navigate the intersection of recruiting strategy and emerging technology. Want to discuss how agentic AI could transform your talent acquisition function? Contact Eddie Stewart at estewart@ESTalentSolutions.com. I’m always happy to talk with fellow leaders about building recruiting functions ready for the future.
